Archive

MS Virtualization for VMware Pros : Jump Start

Exclusive Jump Start virtual training event – “Microsoft Virtualization for VMware Professionals” FREE – on TechNet Edge

Where do I go for this great training?

The HD-quality video recordings of this course are on TechNet Edge. If you’re interested in one specific topic, I’ve included links to each module as well.

· Entire course on TechNet Edge: “Microsoft Virtualization for VMware Professionals” Jump Start

o Virtualization Jump Start (01): Virtualization Overview

o Virtualization Jump Start (02): Differentiating Microsoft & VMware

o Virtualization Jump Start (03a): Hyper-V Deployment Options & Architecture | Part 1

o Virtualization Jump Start (03b): Hyper-V Deployment Options & Architecture | Part 2

o Virtualization Jump Start (04): High-Availability & Clustering

o Virtualization Jump Start (05): System Center Suite Overview with focus on DPM

o Virtualization Jump Start (06): Automation with Opalis, Service Manager & PowerShell

o Virtualization Jump Start (07): System Center Virtual Machine Manager 2012

o Virtualization Jump Start (08): Private Cloud Solutions, Architecture & VMM Self-Service Portal 2.0

o Virtualization Jump Start (09): Virtual Desktop Infrastructure (VDI) Architecture | Part 1

o Virtualization Jump Start (10): Virtual Desktop Infrastructure (VDI) Architecture | Part 2

o Virtualization Jump Start (11): v-Alliance Solution Overview

o Virtualization Jump Start (12): Application Delivery for VDI

· Links to course materials on Born to Learn

Hyper-V R2 and the right number of physical NICs

When it comes to network configuration, be sure to provide the right number of physical network adapters on Hyper-V servers. Failure to configure enough network connections can make it appear as though you have a storage problem, particularly when using iSCSI.

Recommended network configuration (number of dedicated physical NICs):

- 1 for Management. Microsoft recommends a dedicated network adapter for Hyper-V server management.

- At least 1 for Virtual machines. Virtual network configurations of the external type require a minimum of one network adapter.

- 2 for iSCSI. Microsoft recommends that IP storage communication have a dedicated network, so one adapter is required and two or more are necessary to support multipathing.

- At least 1 for Failover cluster. Windows® failover cluster requires a private network.

- 1 for Live migration. This new Hyper-V R2 feature supports the migration of running virtual machines between Hyper-V servers. Microsoft recommends configuring a dedicated physical network adapter for live migration traffic. This network should be separate from the network for private communication between the cluster nodes, from the network for the virtual machines, and from the network for storage.

- 1 for Cluster shared volumes. Microsoft recommends a dedicated network to support the communications traffic created by this new Hyper-V R2 feature. In the network adapter properties, Client for Microsoft Networks and File and Printer Sharing for Microsoft Networks must be enabled to support SMB.
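As a rough illustration, the recommendations above can be tallied per host. This is only a sketch: the role names, the `minimum_nics` function, and the grouping of the last three roles as "cluster only" are assumptions made for the example, and the counts are the minimums listed above.

```python
# Minimum dedicated physical NICs per role, taken from the list above.
# Role names are hypothetical labels used only in this sketch.
NIC_ROLES = {
    "management": 1,
    "virtual_machines": 1,        # at least 1
    "iscsi": 2,                   # 2 so that multipathing is possible
    "failover_cluster": 1,        # at least 1 (private cluster network)
    "live_migration": 1,
    "cluster_shared_volumes": 1,
}

# Assumption: these roles only apply when the host is a cluster node.
CLUSTER_ROLES = {"failover_cluster", "live_migration", "cluster_shared_volumes"}


def minimum_nics(uses_iscsi=True, clustered=True):
    """Return the minimum number of dedicated physical NICs for one host."""
    total = 0
    for role, count in NIC_ROLES.items():
        if role == "iscsi" and not uses_iscsi:
            continue
        if role in CLUSTER_ROLES and not clustered:
            continue
        total += count
    return total


print(minimum_nics())                                    # clustered host using iSCSI
print(minimum_nics(uses_iscsi=False, clustered=False))   # standalone host, no iSCSI
```

For a clustered host with iSCSI storage the tally comes to seven adapters, which is why a server with two onboard ports runs out of connections so quickly.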

Some interesting notes when comparing FC with iSCSI:

- iSCSI and FC delivered comparable throughput performance irrespective of the load on the system.

- iSCSI used approximately 3-5 percentage points more Hyper-V R2 CPU resources than FC to achieve comparable performance.

For information about the network traffic that can occur on a network used for Cluster Shared Volumes, see “Understanding redirected I/O mode in CSV communication” in Requirements for Using Cluster Shared Volumes in a Failover Cluster in Windows Server 2008 R2 (http://go.microsoft.com/fwlink/?LinkId=182153).

For more information on the network used for CSV communication, see Managing the network used for Cluster Shared Volumes.

It is not recommended that you use the same network adapter for virtual machine access and management.

If you are limited by the number of network adapters, you should configure a virtual local area network (VLAN) to isolate traffic. VLAN recommendations include 802.1q and 802.1p.

Hyper-V : Virtual Hard Disks. Benefits of Fixed disks

When creating a Virtual Machine, you can select to use either virtual hard disks or physical disks that are directly attached to a virtual machine.

My personal advice, and what I have seen recommended by Microsoft folks, is to always use fixed disks in production environments, even though one of the enhancements in Windows Server 2008 R2 was improved performance of dynamic VHD files.

The explanation and benefits are simple:

1. Almost the same performance as pass-through disks

2. Portability: you can move or copy the VHD

3. Backup: you can back up at the VHD level and, better still, with DPM you can restore at the item level (how cool is that!)

4. You can take snapshots

5. Fixed-size VHD performance has been on par with physical disks since Windows Server 2008/Hyper-V

If you use pass-through disks you lose all of the benefits of VHD files such as portability, snap-shotting and thin provisioning. Considering these trade-offs, using pass-through disks should really only be considered if you require a disk that is greater than 2 TB in size or if your application is I/O bound and you really could benefit from another .1 ms shaved off your average response time.
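The rule of thumb in the paragraph above can be written down as a small decision helper. This is a minimal sketch: the function name `recommend_disk` and its parameters are made up for illustration, and the 2 TB threshold is the one quoted in this post.

```python
def recommend_disk(size_tb, io_bound=False):
    """Suggest a storage container following the rule of thumb in this post.

    size_tb  -- required disk size in terabytes
    io_bound -- True if the application is I/O bound and needs every last
                fraction of a millisecond of response time
    """
    if size_tb > 2 or io_bound:
        return "pass-through disk"
    return "fixed-size VHD"


print(recommend_disk(0.5))                 # typical production VM
print(recommend_disk(3))                   # larger than 2 TB
print(recommend_disk(1, io_bound=True))    # latency-critical workload
```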

Disks Summary table:

| Storage Container | Pros | Cons |
| Pass-through disk | | |
| Fixed-size VHD | | |
| Dynamically expanding or differencing VHD | | |

SCVMM 2012: Private Cloud Management. Got it!?

It’s a great pleasure to see how far Microsoft has come with SCVMM 2012.

Believe me, it’s a whole new product.

So, if you are serious about Private Cloud Management, this is the product to look into.

•Fabric Management

◦Hyper-V and Cluster Lifecycle Management – Deploy Hyper-V to bare metal server, create Hyper-V clusters, orchestrate patching of a Hyper-V Cluster

◦Third Party Virtualization Platforms – Add and Manage Citrix XenServer and VMware ESX Hosts and Clusters

◦Network Management – Manage IP Address Pools, MAC Address Pools and Load Balancers

◦Storage Management – Classify storage, Manage Storage Pools and LUNs

•Resource Optimization

◦Dynamic Optimization – proactively balance the load of VMs across a cluster

◦Power Optimization – schedule power savings to use the right number of hosts to run your workloads – power the rest off until they are needed.

◦PRO – integrate with System Center Operations Manager to respond to application-level performance monitors.

•Cloud Management

◦Abstract server, network and storage resources into private clouds

◦Delegate access to private clouds with control of capacity, capabilities and user quotas

◦Enable self-service usage for application administrator to author, deploy, manage and decommission applications in the private cloud

•Service Lifecycle Management

◦Define service templates to create sets of connected virtual machines, OS images and application packages

◦Compose operating system images and applications during service deployment

◦Scale out the number of virtual machines in a service

◦Service performance and health monitoring integrated with System Center Operations Manager

◦Decouple OS image and application updates through image-based servicing.

◦Leverage powerful application virtualization technologies such as Server App-V

Note: The SCVMM 2012 Beta is NOT Supported in production environments.

Download SCVMM 2012 Beta Now

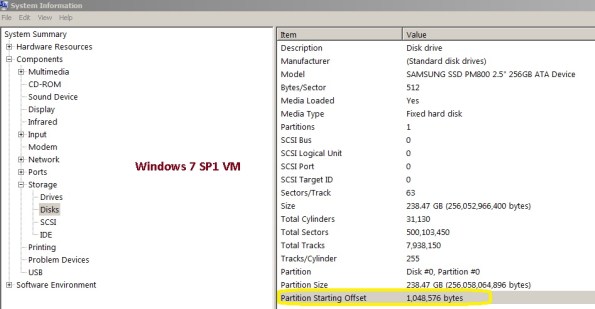

Virtual Machines that are misaligned

For existing child VMs that are misaligned, in order to correct the partition offset, a new physical disk must be created and formatted, and the data has to be migrated from the original disk to the new one.

Important note: Both Windows 7 and Windows Server 2008/2008 R2 create aligned partitions.

This problem occurs when the partitioning scheme used by the host OS doesn’t match the block boundaries inside the LUN. If the guest file system is not aligned, it may become necessary to read or write twice as many blocks of storage as the guest actually requested, since any guest file system block actually occupies at least two partial storage blocks.

All VHD types can be formatted with the correct offset at the time of creation by booting the child VM before installing an OS and manually setting the partition offset. The recommended starting offset value for Windows OSs is 32768. The default starting offset value typically observed is 32256.
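To see why the two offsets behave differently, the sketch below counts how many storage blocks a single guest file system block touches. The 4096-byte block sizes for both the guest and the storage are assumptions chosen for the example, and `storage_blocks_touched` is a hypothetical helper, not part of any tool.

```python
def storage_blocks_touched(partition_offset, guest_block_index,
                           guest_block_size=4096, storage_block_size=4096):
    """Count the storage blocks covered by one guest file system block.

    partition_offset is the partition starting offset in bytes.
    Block sizes of 4096 bytes are assumptions made for this example.
    """
    start = partition_offset + guest_block_index * guest_block_size
    end = start + guest_block_size - 1          # last byte of the guest block
    return end // storage_block_size - start // storage_block_size + 1


for offset in (32256, 32768):
    print(offset, storage_blocks_touched(offset, guest_block_index=0))
```

With the default offset of 32256 every guest block straddles two storage blocks, while with 32768 each guest block maps onto exactly one.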

How to verify the Offset value:

1. Run msinfo32 on the guest VM by selecting Start > All Programs > Accessories > System Tools > System Information, or by selecting Start > Run and entering the following command: msinfo32

2. Navigate to Components > Storage > Disks and check the value for partition starting offset.
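Once you have the value, checking it is simple arithmetic. The sketch below assumes the value is shown as text such as "32,256 bytes" and that alignment is measured against a 4096-byte boundary. Both are assumptions for illustration, so adjust the boundary to whatever your storage vendor specifies.

```python
import re


def parse_offset(text):
    """Extract the byte count from a string such as '32,256 bytes'."""
    match = re.search(r"([\d,]+)\s*bytes", text)
    if match is None:
        raise ValueError(f"no byte count found in {text!r}")
    return int(match.group(1).replace(",", ""))


def is_aligned(offset_bytes, boundary=4096):
    """Return True if the offset is a multiple of the boundary (assumed 4096)."""
    return offset_bytes % boundary == 0


for value in ("32,256 bytes", "32,768 bytes"):
    offset = parse_offset(value)
    print(offset, "aligned" if is_aligned(offset) else "misaligned")
```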

If the misaligned virtual disk is the boot partition, follow these steps:

1. Back up the VM system image.

2. Shut down the VM.

3. Attach the misaligned system image virtual disk to a different VM.

4. Attach a new aligned virtual disk to this VM.

5. Copy the contents of the system image (for example, C: in Windows) virtual disk to the new aligned virtual disk.

There are various tools that can be used to copy the contents from the misaligned virtual disk to the new aligned virtual disk:

− Windows xcopy

− Norton/Symantec™ Ghost: Norton/Symantec Ghost can be used to back up a full system image on the misaligned virtual disk and then be restored to a previously created, aligned virtual disk file system.

For Microsoft Hyper-V LUNs mapped to the Hyper-V parent partition using the incorrect LUN protocol type but with aligned VHDs, create a new LUN using the correct LUN protocol type and copy the contents (VMs and VHDs) from the misaligned LUN to this new LUN.

For Microsoft Hyper-V LUNs mapped to the Hyper-V parent partition using the incorrect LUN protocol type but with misaligned VHDs, create a new LUN using the correct LUN protocol type and copy the contents (VMs and VHDs) from the misaligned LUN to this new LUN.

Next, to set up the starting offset, follow these steps:

1. Boot the child VM with the Windows Preinstall Environment boot CD.

2. Select Start > Run and enter the following command:

diskpart

3. Type the following into the prompt:

select disk 0

4. Type the following into the prompt:

create partition primary align=32

5. Reboot the child VM with the Windows Preinstall Environment boot CD.

6. Install the operating system as normal.

Virtual disks to be used as data disks can be formatted with the correct offset at the time of creation by using Diskpart in the VM. To align the virtual disk, follow these steps:

1. Boot the child VM with the Windows Preinstall Environment boot CD.

2. Select Start > Run and enter the following command:

diskpart

3. Determine the appropriate disk to use by typing the following into the prompt:

list disk

4. Select the correct disk by typing the following into the prompt:

select disk [#]

5. Type the following into the prompt:

create partition primary align=32

6. To exit, type the following in the prompt:

exit

7. Format the data disk as you would normally.
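If you have many data disks to align, the Diskpart commands above can be generated as a script file and passed to `diskpart /s`. The sketch below only builds the script text; the function name `diskpart_align_script` and the disk number used are placeholders for illustration. The `align` value is given in kilobytes, so `align=32` corresponds to the 32768-byte offset recommended earlier.

```python
def diskpart_align_script(disk_number, align_kb=32):
    """Build a Diskpart script that creates an aligned primary partition.

    disk_number -- the disk index reported by 'list disk' (placeholder here)
    align_kb    -- alignment in kilobytes, as expected by Diskpart
    """
    lines = [
        f"select disk {disk_number}",
        f"create partition primary align={align_kb}",
        "exit",
    ]
    return "\n".join(lines) + "\n"


script = diskpart_align_script(1)
print(script)
print(32 * 1024)  # align=32 expressed in bytes
```

Save the generated text to a file and run `diskpart /s` with that file name inside the VM. Double-check the disk number first, because the script does not ask for confirmation.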

For pass-through disks and LUNs directly mapped to the child OS, create a new LUN using the correct LUN protocol type, map the LUN to the VM, and copy the contents from the misaligned LUN to this new aligned LUN.

Microsoft Virtualization for VMware Professionals : Free Online Classes taught by two of the most respected authorities on virtualization technologies

Microsoft Virtualization for VMware Professionals – Free Online Classes – March 29–31

Just one week after Microsoft Management Summit 2011 (MMS), Microsoft Learning will be hosting an exclusive three-day Jump Start class specially tailored for VMware and Microsoft virtualization technology pros. Registration for “Microsoft Virtualization for VMware Professionals” is open now, and the course will be delivered as a FREE online class on March 29-31, 2011 from 10:00am-4:00pm PDT.

What’s the high-level overview?

This cutting-edge course will feature expert instruction and real-world demonstrations of Hyper-V and brand new releases from System Center Virtual Machine Manager 2012 Beta (many of which will be announced just one week earlier at MMS). Register Now!

- Day 1 will focus on “Platform” (Hyper-V, virtualization architecture, high availability & clustering)
  - 10:00am – 10:30am PDT: Virtualization 360 Overview
  - 10:30am – 12:00pm: Microsoft Hyper-V Deployment Options & Architecture
  - 1:00pm – 2:00pm: Differentiating Microsoft and VMware (terminology, etc.)
  - 2:00pm – 4:00pm: High Availability & Clustering

- Day 2 will focus on “Management” (System Center Suite, SCVMM 2012 Beta, Opalis, Private Cloud solutions)
  - 10:00am – 11:00am PDT: System Center Suite Overview w/ focus on DPM
  - 11:00am – 12:00pm: Virtual Machine Manager 2012 | Part 1
  - 1:00pm – 1:30pm: Virtual Machine Manager 2012 | Part 2
  - 1:30pm – 2:30pm: Automation with System Center Opalis & PowerShell
  - 2:30pm – 4:00pm: Private Cloud Solutions, Architecture & VMM SSP 2.0

- Day 3 will focus on “VDI” (VDI Infrastructure/architecture, v-Alliance, application delivery via VDI)
  - 10:00am – 11:00am PDT: Virtual Desktop Infrastructure (VDI) Architecture | Part 1
  - 11:00am – 12:00pm: Virtual Desktop Infrastructure (VDI) Architecture | Part 2
  - 1:00pm – 2:30pm: v-Alliance Solution Overview
  - 2:30pm – 4:00pm: Application Delivery for VDI

Every section will be team-taught by two of the most respected authorities on virtualization technologies: Microsoft Technical Evangelist Symon Perriman and leading Hyper-V, VMware, and Xen infrastructure consultant Corey Hynes.

Who is the target audience for this training?

Suggested prerequisite skills include real-world experience with Windows Server 2008 R2, virtualization and datacenter management. The course is tailored to these types of roles:

- IT Professional

- IT Decision Maker

- Network Administrators & Architects

- Storage/Infrastructure Administrators & Architects

How do I register and learn more about this great training opportunity?

- Register: Visit the Registration Page and sign up for all three sessions

- Blog: Learn more from the Microsoft Learning Blog

- Twitter: Here are a few posts you can retweet:
  - Mar. 29-31 “Microsoft #Virtualization for VMware Pros” @SymonPerriman Corey Hynes http://bit.ly/JS-Hyper-V @MSLearning #Hyper-V
  - @SysCtrOpalis Mar. 29-31 “Microsoft #Virtualization for VMware Pros” @SymonPerriman Corey Hynes http://bit.ly/JS-Hyper-V #Hyper-V
  - Learn all the cool new features in Hyper-V & System Center 2012! SCVMM, Self-Service Portal 2.0, http://bit.ly/JS-Hyper-V #Hyper-V #Opalis

What is a “Jump Start” course?

A “Jump Start” course is “team-taught” by two expert instructors in an engaging radio talk show style format. The idea is to deliver readiness training on strategic and emerging technologies that drive awareness at scale before Microsoft Learning develops mainstream Microsoft Official Courses (MOC) that map to certifications. All sessions are professionally recorded and distributed through MS Showcase, Channel 9, Zune Marketplace and iTunes for broader reach.

Windows 2008R2/Windows 7 SP1: Changes common to both client and server platforms

Here are the changes common to Windows Server 2008 R2 and Windows 7 after applying SP1:

1. Change to behavior of “Restore previous folders at logon” functionality

SP1 changes the behavior of the “Restore previous folders at logon” function available in the Folder Options Explorer dialog. Prior to SP1, previous folders would be restored in a cascaded position based on the location of the most recently active folder. That behavior changes in SP1 so that all folders are restored to their previous positions.

2. Enhanced support for additional identities in RRAS and IPsec

Support for additional identification types has been added to the Identification field in the IKEv2 authentication protocol. This allows for a variety of additional forms of identification (such as E-mail ID or Certificate Subject) to be used when performing authentication using the IKEv2 protocol.

3. Support for Advanced Vector Extensions (AVX)

There has always been a growing need for ever more computing power, and as usage models change, processor instruction set architectures evolve to support these growing demands. Advanced Vector Extensions (AVX) is a 256-bit instruction set extension for processors. AVX is designed to allow for improved performance for applications that are floating point intensive. Support for AVX is a part of SP1 to allow applications to fully utilize the new instruction set and register extensions.

4. Improved Support for Advanced Format (512e) Storage Devices

SP1 introduces a number of key enhancements to improve support of recently introduced storage devices with a 4KB physical sector size (commonly referred to as “Advanced Format”). These enhancements include functionality fixes, improved performance, and updated storage drivers which give applications the ability to retrieve the physical sector size of the storage device. More information on these enhancements is detailed in Microsoft KB 982018.

Changes specific to Windows 7 with SP1

Here are the specific changes for Windows 7 SP1

1. Additional support for communication with third-party federation services

Additional support has been added to allow Windows 7 clients to effectively communicate with third-party identity federation services (those supporting the WS-Federation passive profile protocol). This change enhances platform interoperability, and improves the ability to communicate identity and authentication information between organizations.

2. Improved HDMI audio device performance

A small percentage of users have reported issues in which the connection between computers running Windows 7 and HDMI audio devices can be lost after system reboots. Updates have been incorporated into SP1 to ensure that connections between Windows 7 computers and HDMI audio devices are consistently maintained.

3. Corrected behavior when printing mixed-orientation XPS documents

Prior to the release of SP1, some customers have reported difficulty when printing mixed-orientation XPS documents (documents containing pages in both portrait and landscape orientation) using the XPS Viewer, resulting in all pages being printed entirely in either portrait or landscape mode. This issue has been addressed in SP1, allowing users to correctly print mixed-orientation documents using the XPS Viewer.

Windows 7 and W2008 R2 SP1 released. Important Steps to follow before you install

SP1 Download link : http://www.microsoft.com/downloads/en/details.aspx?FamilyID=c3202ce6-4056-4059-8a1b-3a9b77cdfdda

Before you install Windows 7 SP1, make sure that you follow these steps:

Step 1: Uninstall any previously installed version of SP1

Uninstalling SP1 using Programs and Features

The easiest way to uninstall SP1 is using Programs and Features.

- Click the Start button, click Control Panel, click Programs, and then click Programs and Features.

- Click View installed updates.

- Click Service Pack for Microsoft Windows (KB 976932), and then click Uninstall.

If you don’t see Service Pack for Microsoft Windows (KB 976932) in the list of installed updates, your computer likely came with SP1 already installed, and you can’t uninstall the service pack. If the service pack is listed but grayed out, you can’t uninstall the service pack.

Uninstalling SP1 using the Command Prompt

- Click the Start button, and then, in the search box, type Command Prompt.

- In the list of results, right-click Command Prompt, and then click Run as administrator. If you’re prompted for an administrator password or confirmation, type the password or provide confirmation.

- Type the following: wusa.exe /uninstall /kb:976932

- Press the Enter key.

Step 2: Back up your important files

Back up your files to an external hard disk, DVD or CD, USB flash drive, or network folder. For information about how to back up your files, see Back up your files (http://windows.microsoft.com/en-US/windows7/Back-up-your-files) .

Step 3: Update device drivers

Update device drivers as necessary. You can do this by using Windows Update in Control Panel or by going to the device manufacturer’s website.

Important: If you are using an Intel integrated graphics device, you should be aware that there are known issues with certain versions of the Intel integrated graphics device driver and with D2D enabled applications, such as certain versions of Windows Live Mail. The versions of the Intel integrated graphics device driver that are known to be problematic are Igdkmd32.sys and Igdkmd64.sys versions 8.15.10.2104 through 8.15.10.2141. For more information about a known issue with these drivers and with Windows Live Mail, see Microsoft Knowledge Base article 2505524 (http://support.microsoft.com/kb/2505524).

To check whether you are using the Intel integrated graphics device driver Igdkmd32.sys or Igdkmd64.sys versions 8.15.10.2104 through 8.15.10.2141, follow these steps:

- Start DirectX Diagnostic Tool. To do this, click Start, type dxdiag in the Search programs and files box, and then press Enter.

- Click the Display tab.

- Note the driver and driver version.

- If you have the Intel integrated graphics driver Igdkmd32.sys or Igdkmd64.sys versions 8.15.10.2104 through 8.15.10.2141, visit the computer manufacturer’s website to see whether a newer driver is available, and then download and install that driver.
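Comparing the version that dxdiag shows against the affected range is easy to get wrong by eye. The sketch below does it numerically. The helper names are made up for the example, and the range bounds are the ones quoted above.

```python
# Affected Igdkmd32.sys / Igdkmd64.sys driver versions, as quoted above.
AFFECTED_LOW = (8, 15, 10, 2104)
AFFECTED_HIGH = (8, 15, 10, 2141)


def parse_version(version):
    """Turn '8.15.10.2104' into (8, 15, 10, 2104) for numeric comparison."""
    return tuple(int(part) for part in version.split("."))


def is_affected_driver(version):
    """Return True if the driver version falls inside the affected range."""
    return AFFECTED_LOW <= parse_version(version) <= AFFECTED_HIGH


for version in ("8.15.10.2086", "8.15.10.2104", "8.15.10.2141", "8.15.10.2202"):
    print(version, "affected" if is_affected_driver(version) else "ok")
```

Comparing tuples of integers avoids the string-comparison trap where "8.15.10.999" would sort after "8.15.10.2104".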

Step 4: Install Windows Update KB2454826

Install Windows Update KB2454826 from Windows Update (http://www.update.microsoft.com/) if it is not already installed. If you install the service pack from the Microsoft Download Center and do not install Windows Update KB2454826, you could encounter a Stop error in Windows in rare cases.

Windows Update KB2454826 will automatically be installed when you install the service pack by using Windows Update. However, Windows Update KB2454826 is not automatically installed when you install the service pack from the Microsoft Download Center.

To check whether Windows Update KB2454826 is installed, click Start, type View installed updates in the Search programs and files box, and then press Enter. Notice whether Update for Microsoft Windows (KB2454826) is listed. If the update is not listed, you will have to install it from Windows Update.
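The decision described in this step can be summarized in a few lines. This is a sketch only: the function name and the `install_source` labels ("windows_update", "download_center") are invented for the example, and the list of installed updates is something you would collect yourself, for instance from the View installed updates window.

```python
REQUIRED_KB = "KB2454826"


def must_install_kb_first(install_source, installed_updates):
    """Return True if KB2454826 has to be installed manually before SP1.

    install_source    -- 'windows_update' or 'download_center' (hypothetical labels)
    installed_updates -- iterable of KB identifiers already on the machine
    """
    if install_source == "windows_update":
        # Windows Update installs KB2454826 automatically with the service pack.
        return False
    installed = {update.upper() for update in installed_updates}
    return REQUIRED_KB not in installed


print(must_install_kb_first("download_center", ["KB976902"]))
print(must_install_kb_first("download_center", ["kb2454826"]))
print(must_install_kb_first("windows_update", []))
```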

Step 5: Check for malware

Check your computer for malware by using antivirus software. Some antivirus software is sold together with annual subscriptions that can be renewed as needed. However, much antivirus software is also available for free. Microsoft offers Microsoft Security Essentials, free antivirus software that you can download from the Microsoft Security Essentials (http://go.microsoft.com/fwlink/?LinkId=168949) website. You can also visit the Microsoft Consumer security software providers (http://go.microsoft.com/fwlink/?LinkId=135654) webpage to find third-party antivirus software.

Important: If your computer is infected with malware and you install Windows 7 SP1, you could encounter blue screens or a Windows Update error such as 8007f0f4 or FFFFFFFF. If malware is detected, Windows Update will be unable to install SP1.

MS Support policy for SQL Server in a virtualization environment

SQL Server 2005/ 2008 / 2008 R2 are supported in the following virtualization environments:

- Windows Server 2008 /R2 with Hyper-V

- Microsoft Hyper-V Server 2008 / R2

- Configurations that are validated through the Server Virtualization Validation Program (SVVP), which includes VMware and Citrix.

Note: The SVVP solution must be running on hardware that is certified for Windows Server 2008 to be considered a valid SVVP configuration.

Restrictions and Limitations

- Guest Failover Clustering is supported for SQL Server 2005 / 2008 / 2008 R2 in a virtual machine provided all of the following requirements are met:

- The OS running in the virtual machine (the “Guest Operating System”) is Windows Server 2008 or higher

- The virtualization environment meets the requirements of Windows 2008 Failover Clustering, as documented in the following Microsoft Knowledge Base article:

943984 (http://support.microsoft.com/kb/943984/ ) The Microsoft Support Policy for Windows Server 2008 Failover Clusters

- Virtualization snapshots for Hyper-V or any virtualization vendor are not supported for use with SQL Server in a virtual machine. It is possible that you may not encounter any problems when using snapshots and SQL Server, but Microsoft will not provide technical support to SQL Server customers for a virtual machine that was restored from a snapshot.

Are Quick Migration and Live Migration with Windows Server 2008 R2 Hyper-V supported with SQL Server?

Yes. Live Migration is supported for SQL Server 2005, SQL Server 2008, and SQL Server 2008 R2 when using Windows Server 2008 R2 with Hyper-V or Hyper-V Server 2008 R2. Quick Migration, which was introduced with Windows Server 2008 with Hyper-V and Hyper-V Server 2008, is also supported for SQL Server 2005, 2008, and 2008 R2 on Windows Server 2008 with Hyper-V, Windows Server 2008 R2 with Hyper-V, Hyper-V Server 2008, and Hyper-V Server 2008 R2.

For more information: http://support.microsoft.com/kb/956893
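The migration support statement above boils down to a small lookup. The sketch below encodes it as I read it from this post. The function name and the string labels are made up for illustration, and the KB article remains the authoritative source.

```python
# Host platforms named in the support statement above.
R2_HOSTS = {
    "Windows Server 2008 R2 with Hyper-V",
    "Hyper-V Server 2008 R2",
}
ALL_HOSTS = R2_HOSTS | {
    "Windows Server 2008 with Hyper-V",
    "Hyper-V Server 2008",
}
SQL_VERSIONS = {"2005", "2008", "2008 R2"}


def migration_supported(migration, host, sql_version):
    """Return True if the migration type is supported for this combination.

    migration -- 'live' or 'quick' (labels chosen for this sketch)
    """
    if sql_version not in SQL_VERSIONS:
        return False
    if migration == "live":
        return host in R2_HOSTS       # Live Migration needs an R2 host
    if migration == "quick":
        return host in ALL_HOSTS      # Quick Migration works on all four hosts
    raise ValueError(f"unknown migration type: {migration!r}")


print(migration_supported("live", "Hyper-V Server 2008 R2", "2008"))
print(migration_supported("live", "Hyper-V Server 2008", "2008"))
print(migration_supported("quick", "Hyper-V Server 2008", "2005"))
```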