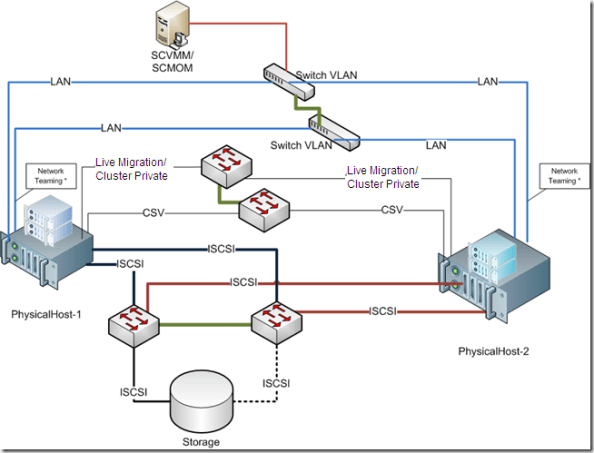

Hyper-V R2: Storage/Network Design for High Availability

By converting your physical servers to virtual ones, you immediately get extra capabilities that make them less likely to go down and easier to bring back up when they do:

- Snapshots enable you to go back in time when a software update or configuration change blows up an otherwise healthy server.

- Virtual hard disks consolidate the thousands of files that make up a Windows server into a single file for backups, which significantly improves the reliability of those backups.

- Volume Shadow Copy Service (VSS) support, which is natively available in Hyper-V, means that backups are application-consistent, so applications come back from a restore in a consistent state and immediately ready for operation.

- Migration capabilities make planned downtime less disruptive by providing a mechanism for relocating virtual machines to new hosts with little to no disruption in service.

- Failover clustering means that when a virtual host is lost, its virtual machines are automatically restarted on other nodes, where they can continue doing their job.

What has become much more critical is keeping servers, applications, and services running.

To provide high availability, we need to design our environment properly. With the right combination of technologies, you can increase the availability of your environment inexpensively.

The best practices are based on the following design principles:

- Redundant hardware to eliminate single points of failure

- Load balancing and failover for iSCSI and network traffic

- Redundant paths for the cluster, Cluster Shared Volume (CSV), and live migration traffic

- Separation of each traffic type for security and availability

- Ease of use and implementation

Remember: Windows Server 2008 R2 Enterprise or Windows Server 2008 R2 Datacenter must be used for the physical computers. These servers must run the same version of Windows Server 2008 R2, including the same type of installation. That is, all of the servers must be either full installations or Server Core installations.

Also, Hyper-V requires an x64-based processor, hardware-assisted virtualization, and hardware-enforced Data Execution Prevention (DEP). Specifically, you must enable the Intel XD bit (execute disable bit) or AMD NX bit (no execute bit).
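These requirements are easy to get wrong on one node out of several. As a minimal sketch in plain Python (not a Windows API; the `Host` fields are hypothetical stand-ins for values you would collect from each server's BIOS and OS), a pre-flight check could compare every node against them:

```python
"""Hypothetical pre-flight check of Hyper-V R2 cluster host requirements."""
from dataclasses import dataclass

# Editions required for the physical hosts.
REQUIRED_EDITIONS = {"Enterprise", "Datacenter"}


@dataclass
class Host:
    """Facts about one physical server (field names are illustrative assumptions)."""
    name: str
    edition: str                   # e.g. "Enterprise", "Datacenter", "Standard"
    installation: str              # "Full" or "Server Core"
    x64: bool                      # x64-based processor
    hardware_virtualization: bool  # Intel VT / AMD-V enabled
    dep: bool                      # Intel XD / AMD NX bit enabled


def check_hosts(hosts: list[Host]) -> list[str]:
    """Return a list of problems; an empty list means every host passes."""
    problems: list[str] = []
    for h in hosts:
        if h.edition not in REQUIRED_EDITIONS:
            problems.append(f"{h.name}: {h.edition} is not Enterprise or Datacenter")
        if not h.x64:
            problems.append(f"{h.name}: processor is not x64")
        if not h.hardware_virtualization:
            problems.append(f"{h.name}: hardware-assisted virtualization is disabled")
        if not h.dep:
            problems.append(f"{h.name}: hardware DEP (XD/NX) is disabled")
    # All nodes must share the same installation type.
    if len({h.installation for h in hosts}) > 1:
        problems.append("hosts mix Full and Server Core installations")
    return problems


if __name__ == "__main__":
    nodes = [
        Host("node1", "Datacenter", "Full", True, True, True),
        Host("node2", "Standard", "Server Core", True, True, True),
    ]
    for problem in check_hosts(nodes):
        print(problem)
```

In this example, pairing a Datacenter node with a Standard, Server Core node reports both the unsupported edition and the mixed installation types.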

Servers

Server-class equipment. Using equipment that is not listed in the Windows Server Catalog can affect supportability and may not best meet the needs of your virtual machines. Moving to tested and supported server-class equipment ensures full support in case of a problem. The Windows Server Catalog is available on the Microsoft website at http://go.microsoft.com/fwlink/?LinkId=111228

iSCSI Storage

I would recommend Dell EqualLogic, Compellent, IBM, NetApp, or EMC, but you should evaluate other vendors as well.

iSCSI Software

If you need to use software-based iSCSI, look carefully at the features available. Microsoft failover clustering requires the iSCSI target to support SCSI Primary Commands-3 (SPC-3), specifically Persistent Reservations. Most commercial iSCSI software currently supports this capability, but there is very little support for it in most open source software packages.
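Persistent Reservations are how a cluster node claims a shared disk and how a surviving node takes it over. The toy model below sketches the idea in Python; the class and method names are hypothetical, and it deliberately leaves out most of SPC-3 (reservation types, persistence across power loss, exact error handling) as well as the specific command sequence Windows uses. Nodes register a key, one node holds the reservation, and a survivor can preempt a failed owner's key.

```python
"""Toy model of SCSI-3 Persistent Reservations on a shared LUN (heavily simplified)."""
from typing import Optional


class SharedLun:
    def __init__(self) -> None:
        self.registrations: dict[str, int] = {}  # initiator name -> reservation key
        self.holder: Optional[str] = None        # initiator currently holding the reservation

    def register(self, initiator: str, key: int) -> None:
        """REGISTER: record this initiator's reservation key."""
        self.registrations[initiator] = key

    def reserve(self, initiator: str) -> bool:
        """RESERVE succeeds only for a registered initiator when no one else holds the LUN."""
        if initiator not in self.registrations:
            return False
        if self.holder is not None and self.holder != initiator:
            return False
        self.holder = initiator
        return True

    def preempt(self, initiator: str, victim_key: int) -> bool:
        """PREEMPT: a registered initiator removes other registrations that use victim_key.

        If the reservation holder was among them, the preempting initiator takes over.
        """
        if initiator not in self.registrations:
            return False
        victims = [i for i, k in self.registrations.items()
                   if k == victim_key and i != initiator]
        for victim in victims:
            del self.registrations[victim]
        if self.holder in victims:
            self.holder = initiator
        return bool(victims)


if __name__ == "__main__":
    lun = SharedLun()
    lun.register("node1", 0x11)
    lun.register("node2", 0x22)
    print(lun.reserve("node1"))  # node1 claims the disk
    print(lun.reserve("node2"))  # refused while node1 holds it
    # node1 fails: node2 evicts node1's key and takes over the disk.
    lun.preempt("node2", 0x11)
    print(lun.holder)
```

A target without Persistent Reservation support offers no equivalent of `reserve` and `preempt`, so a surviving node has no safe way to take ownership of the disk.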

One inexpensive and easy-to-use software package is the StarWind iSCSI Target from StarWind Software. There is also a free version of the StarWind iSCSI Target that allows multiple connections. You cannot get it by filling in the automatic form on their site; you have to ask support@starwindsoftware.com for a free NFR unlock key manually.

Network

How about the network configuration? Here is my proposal, and this is what I am using in terms of NICs/ports:

- 1 for management
- 2 private: 1 for cluster private/CSV (primary), 1 for live migration (primary)
- 2 for virtual machine network traffic (teamed)
- 2 for iSCSI

2 dedicated NICs/ports for network traffic, configured as a team

The failover cluster should be configured not to use this network.

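Redundancy for this network is provided by binding the Hyper-V virtual switch to a network team. The team can provide load balancing, link aggregation, and failover capabilities to the virtual network. In Windows Server 2008 R2, teaming is provided by the NIC vendor's software rather than by Windows itself.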
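The load balancing and failover that a team provides can be illustrated with a toy model (plain Python with a hypothetical class; on Windows Server 2008 R2 the real work is done by the NIC vendor's teaming driver). New flows rotate across the healthy members, and a member whose link goes down is simply skipped.

```python
"""Toy model of a NIC team: load balancing across healthy members, failover when one fails."""


class NicTeam:
    def __init__(self, members: list[str]) -> None:
        self.members = list(members)  # physical NICs in the team, in a fixed order
        self.healthy = set(members)   # members whose link is currently up
        self._counter = 0             # drives the round-robin choice

    def link_down(self, nic: str) -> None:
        self.healthy.discard(nic)

    def link_up(self, nic: str) -> None:
        if nic in self.members:
            self.healthy.add(nic)

    def pick(self) -> str:
        """Choose the member for the next flow, rotating over healthy NICs only."""
        active = [nic for nic in self.members if nic in self.healthy]
        if not active:
            raise RuntimeError("all team members are down")
        nic = active[self._counter % len(active)]
        self._counter += 1
        return nic


if __name__ == "__main__":
    team = NicTeam(["NIC1", "NIC2"])
    print([team.pick() for _ in range(4)])  # ['NIC1', 'NIC2', 'NIC1', 'NIC2']
    team.link_down("NIC1")
    print([team.pick() for _ in range(3)])  # ['NIC2', 'NIC2', 'NIC2']
```

Real teaming software usually assigns whole flows to a member (for example by hashing MAC or IP addresses) rather than rotating per packet, which avoids reordering; simple rotation just keeps the sketch short.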

NIC teaming is the process of grouping several physical NICs into a single logical NIC, which can be used for network fault tolerance and transmit load balancing. Teaming has two purposes:

- Fault tolerance: By teaming more than one physical NIC into a logical NIC, high availability is maximized. Even if one NIC fails, the network connection does not drop and continues to operate on the other NICs.
- Load balancing: Balancing the network traffic load on a server can improve the performance of the server and the network. Load balancing within NIC teams distributes traffic among the members of the team so that traffic is routed across all available paths.

2 dedicated NICs/ports for CSV

Minimum 1 Gb; I personally recommend 10 Gb. On a two-node cluster you can use crossover cables, but if you plan to use more nodes, you need a switch. If you choose 10 Gb, your switch also needs to be 10 Gb.
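Why 10 Gb? A back-of-the-envelope calculation helps. The sketch below estimates how long it takes to push a given amount of data, such as a virtual machine's memory during live migration, over the private network at 1 Gb versus 10 Gb. The 8 GB VM and the 90% efficiency factor are illustrative assumptions, not measurements.

```python
"""Back-of-the-envelope transfer time over the private (CSV/live migration) network."""


def transfer_seconds(gigabytes: float, link_gbps: float, efficiency: float = 0.9) -> float:
    """Seconds to move `gigabytes` (10**9 bytes) over a `link_gbps` link.

    `efficiency` is an assumed fraction of line rate actually achieved after
    protocol overhead; 0.9 is an illustrative guess, not a measurement.
    """
    bits = gigabytes * 8e9
    return bits / (link_gbps * 1e9 * efficiency)


if __name__ == "__main__":
    vm_memory_gb = 8  # hypothetical VM size
    for speed in (1, 10):
        print(f"{speed:>2} Gb: {transfer_seconds(vm_memory_gb, speed):5.1f} s")
```

Under these assumptions, 8 GB takes roughly 71 seconds at 1 Gb and about 7 seconds at 10 Gb. Live migration copies a VM's memory at least once, plus any pages that change during the copy, and a slower link gives a busy VM more time to dirty pages that must be sent again.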

A feature of failover clusters called Cluster Shared Volumes (CSV) is specifically designed to enhance the availability and manageability of virtual machines. Cluster Shared Volumes are volumes in a failover cluster that multiple nodes can read from and write to at the same time.

CSV provides many benefits, including easier storage management, greater resiliency to failures, and the ability to store many VMs on a single LUN and have them fail over individually. Most notably, CSV provides the infrastructure to support and enhance live migration of Hyper-V virtual machines.

Cluster private traffic flows over the private network with the lowest cluster metric (typically a value of 1000).

To view the cluster network metric settings, run the following PowerShell commands:

```powershell
Import-Module FailoverClusters
Get-ClusterNetwork | ft Name, Metric, AutoMetric
```

If the automatically assigned metrics are not the desired values, note the names of the networks you want to change (from the output above) and set the metric values manually:

```powershell
$cn = Get-ClusterNetwork "<cluster network name>"
$cn.Metric = <value>
```

Cluster private/CSV should have a value of 1000.

Live migration should have a value of 1100. Because this is the next-lowest metric, the live migration network also acts as the backup path for CSV traffic if the CSV network fails.

2 dedicated NICs/ports for iSCSI traffic

Minimum 1 Gb; I personally recommend 10 Gb (the difference in price will be about 10% more). Remember: if you choose 10 Gb, your switch and your storage also need to be 10 Gb.
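The point about matching switch and storage speeds is simple arithmetic: each path runs at the speed of its slowest hop. The sketch below uses illustrative segment speeds, and its aggregate assumes an MPIO load-balancing policy such as round robin that uses both iSCSI paths at once. It shows why 10 Gb host NICs buy nothing if the storage ports are still 1 Gb.

```python
"""End-to-end iSCSI bandwidth: each path is limited by its slowest segment."""

# Segment speeds in Gb/s for one path: (host NIC, switch port, storage port).
Path = tuple[float, float, float]


def path_gbps(path: Path) -> float:
    """A path runs no faster than its slowest hop."""
    return min(path)


def aggregate_gbps(paths: list[Path]) -> float:
    """Best case with MPIO spreading I/O over all paths (e.g. a round-robin policy).

    With a failover-only policy you would get a single path's bandwidth instead.
    """
    return sum(path_gbps(p) for p in paths)


if __name__ == "__main__":
    mixed = [(10.0, 10.0, 1.0), (10.0, 10.0, 1.0)]   # 10 Gb NICs and switch, 1 Gb storage
    full = [(10.0, 10.0, 10.0), (10.0, 10.0, 10.0)]  # 10 Gb end to end
    print(aggregate_gbps(mixed), aggregate_gbps(full))
```

Running it prints `2.0 20.0`: two paths capped by 1 Gb storage ports versus two paths that are 10 Gb end to end.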

The mass-storage device controllers that are dedicated to the cluster storage should be identical and should use the same firmware version. Isolating iSCSI traffic on its own network path keeps it on its own network segment, ensuring its full availability as network conditions change.

Multipath I/O (MPIO) software needs to be installed on the Hyper-V hosts to manage the disks properly. This is done by first enabling the Windows Server Multipath I/O feature, which is not installed by default. Also, enable jumbo frames on the two interfaces identified for iSCSI; the switch ports and the storage must support them as well.

1 NIC/port for management

External management applications (SCVMM, DMC, backup/restore, etc.) communicate with the cluster through this network.
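To sum up the per-host design, the sketch below tallies the ports proposed in the Network section and applies the "no single point of failure" principle from the start of the post. It is plain Python, and the dictionary and helper names are hypothetical.

```python
"""Per-host NIC plan from this post, with a simple single-point-of-failure check."""

# Ports per role, as proposed in the Network section.
NIC_PLAN: dict[str, int] = {
    "management": 1,
    # Grouped because CSV can fall back to the live migration network (next-lowest metric).
    "private: cluster/CSV + live migration": 2,
    "virtual machine traffic (team)": 2,
    "iSCSI (MPIO)": 2,
}


def total_ports(plan: dict[str, int]) -> int:
    """Physical NIC ports each Hyper-V host needs."""
    return sum(plan.values())


def single_port_roles(plan: dict[str, int]) -> list[str]:
    """Roles that lose connectivity if their only port fails."""
    return [role for role, ports in plan.items() if ports < 2]


if __name__ == "__main__":
    print("Ports per host:", total_ports(NIC_PLAN))
    print("Single-port roles:", single_port_roles(NIC_PLAN))
```

It reports 7 ports per host and flags only management, the one role the plan leaves on a single port.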

Hi,

great post about our software! Your feedback is very much appreciated. One correction so far… There *IS* a free version of StarWind iSCSI target allowing multiple connections. You cannot get it by filling in the automatic form on our site; you have to ask support@starwindsoftware.com for a free NFR unlock key manually. And another little tricky thing… We’re StarWind Software, a spin-off from Rocket Division Software since late 2008 🙂

Good luck and happy clustering!

Anton Kolomyeytsev

CTO, StarWind Software

Proud member of iSCSI SAN community.